— Video of talk; written summary of debate by Karen Asp

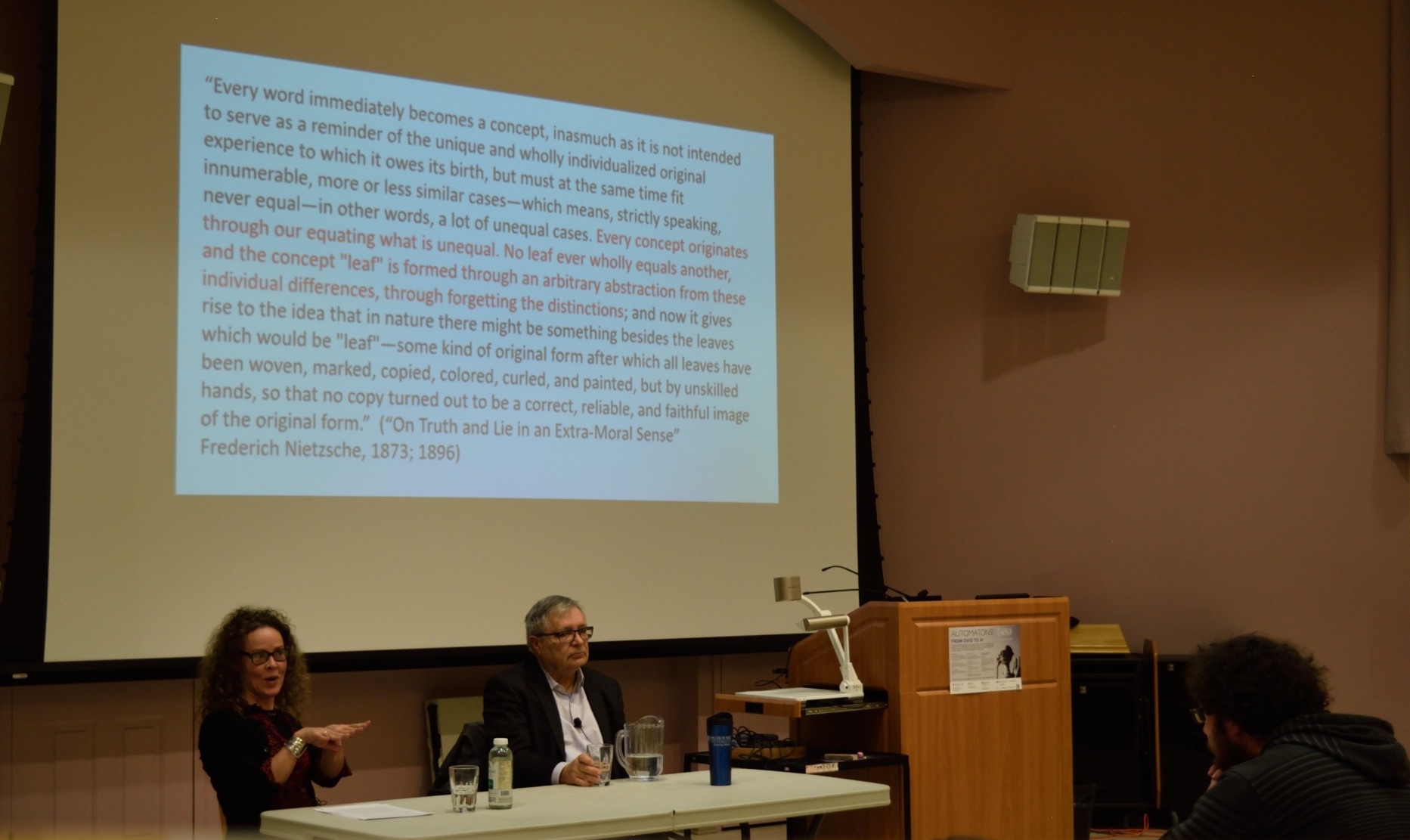

Divergent perspectives on recent developments in artificial intelligence (AI) and robotics were evident at a public lecture hosted last week (Feb 14, 2018) at the University of King’s College (Halifax). The keynote speaker, Stan Matwin, presented a nuanced, but largely optimistic view of where research in AI is heading, and the value that advances like “deep learning” promise for society. Following Dr. Matwin’s talk, Teresa Heffernan offered some critical commentary, emphasizing how deceptive claims were being used to promote initiatives in the industry, feeding on and reinforcing collective fantasies and delusional thoughts about, for example, the “aliveness” of robots.

Dr. Matwin (Dalhousie University, Canada Research Chair in Visual Textual Analytics) summarized the history of AI and characterized advances in the field since the advent of “deep learning.” He explained how “classical” machine learning depended on human labour for the provision of “representations” and “examples” comprising the “knowledge” being imparted to the “artificial” system. In contrast, Dr. Matwin showed, the emerging field of “deep learning” requires human effort in the compilation of “examples” only; the machine “learns” what the examples represent by “finding non-linear relationships between” them. Moreover, according to Dr. Matwin, 2017 saw the development of a self-training chess-playing program (AlphaZero) able to learn the game without humans supplying either “representations” or “examples.” Based on the powers exhibited by “deep learning” systems, Dr. Matwin predicted that significant social changes were on the horizon, such as white-collar job loss. Nonetheless, he averred that the ubiquity of high-tech machines like smartphones was indicative of the inevitability of technological progress in general, and of the benefits of AI in particular.

Dr. Heffernan, professor of English at Saint Mary’s University and director of the Social Robot Futures Project, introduced her talk by showing a segment from the Tonight Show (Jimmy Fallon) in which the CEO of Hanson Robotics (David Hanson) affirmed that his robot “Sophia” was “basically alive.”

Dr. Heffernan then argued that “Sophia” was, in fact, merely a “chatbot” in a robotic body: it functions by reacting to a user’s statements, queries and expressions with prewritten scripts, and/or information gathered from the internet. The user’s spoken statements are transcribed into text that is then matched with automated replies. Dr. Heffernan presented some of the open-source code used in programming Sophia’s chatbot capabilities. In sum, she argued, with reference to CEO Hanson’s performance on the Tonight Show, that “what you are watching is a showman and an impressive spectacle.”

Dr. Heffernan explained how the scientist who invented the “chatbot” concept in the 1960s, Joseph Weizenbaum, had since become a critic of the industry. In a famous experiment, he programmed the chatbot “Eliza” with a dialogic model based on psychotherapy, giving rise to the so-called “DOCTOR” script. Weizenbaum noticed that although users fully understood how the DOCTOR-scripted chatbot worked, which was to respond with stock phrases or pick up on the last statement the subject made, they nevertheless divulged intimate personal details and attributed feelings to it. Regarding this phenomenon, Dr. Heffernan quoted Weizenbaum’s own reflections: “What I had not realized is that extremely short exposures to a relatively simple computer program could induce powerful delusional thinking in quite normal people.” In a documentary called Plug and Pray, Weizenbaum expressed his concern about the development of this technology given people’s susceptibility to being manipulated.
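To make the mechanism described above concrete, here is a minimal Python sketch of a chatbot that either picks up on the user's last statement or falls back on a stock phrase. It is an illustration only: the rules, the stock phrases, and the names `respond` and `reflect` are hypothetical and are not taken from Weizenbaum's DOCTOR script or from Sophia's code.

```python
import re

# Hypothetical rules for illustration; not Weizenbaum's original DOCTOR script.
# Each rule pairs a pattern for the user's statement with a reply template.
RULES = [
    (re.compile(r"i am (.+)", re.IGNORECASE), "Why do you say you are {}?"),
    (re.compile(r"i feel (.+)", re.IGNORECASE), "Why do you feel {}?"),
    (re.compile(r"my (.+)", re.IGNORECASE), "Why do you say your {}?"),
]

# Stock phrases used when no rule matches the statement.
STOCK_PHRASES = ["Please go on.", "Tell me more.", "How does that make you feel?"]

# First-person words are swapped for second-person ones when echoing the user.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "i'm": "you are"}


def reflect(fragment: str) -> str:
    """Lowercase the fragment and swap first-person words for second-person ones."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(statement: str, turn: int = 0) -> str:
    """Echo back part of the user's statement, or fall back on a stock phrase."""
    cleaned = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.match(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    # No rule matched: cycle through the stock phrases by turn number.
    return STOCK_PHRASES[turn % len(STOCK_PHRASES)]


if __name__ == "__main__":
    print(respond("I am sad about my job."))
    print(respond("The weather is nice.", turn=1))
```

Nothing in this sketch models meaning: the reply to "I am sad about my job." is produced purely by matching the opening words and swapping pronouns, which is the kind of surface behaviour the DOCTOR script is described as relying on.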

Notwithstanding Weizenbaum’s public statements on these issues, according to Dr. Heffernan, contemporary “marketers of social robots like Sophia, which are now enhanced by faster computer processors and access to big data sets, encourage this delusional thinking instead of exposing it.”

You can watch Dr. Heffernan’s 15-minute talk here. More information about the “Automatons” lecture series at King’s College can be found here.